TrueSkill: Microsoft's Rating System for Xbox Live

We’ve explored Elo and the Glicko family of rating systems so far. Now it’s time to dive into a more modern approach: Microsoft’s TrueSkill system.

Developed for Xbox Live matchmaking, TrueSkill represents a significant advancement in rating system design. It uses Bayesian inference to update, after every game, both an estimate of each player’s skill and the uncertainty in that estimate.

The Origin Story: From Chess to Xbox

TrueSkill was developed by Microsoft Research for use in Xbox Live’s matchmaking service. Development began around 2005-2006, with the formal algorithm published in 2007 (Herbrich et al.). It was designed to address several limitations of existing rating systems:

- The need to handle team-based games, not just 1v1 matches

- The challenge of accurately rating players after very few games

- The desire to create balanced, enjoyable matches for players of all skill levels

- The ability to handle matches with more than two players or teams

While Elo and Glicko were designed primarily for chess, TrueSkill was built from the ground up for modern video games, where players might participate in team-based competitions, free-for-all matches, or other complex competitive formats.

How TrueSkill Works: Bayesian Skill Modeling

TrueSkill takes a different approach from Elo. Like Glicko, it tracks uncertainty alongside the skill estimate, but it frames skill explicitly as a probability distribution and updates that distribution with Bayesian inference:

Each player’s skill is represented by a Gaussian (normal) distribution with two parameters:

- μ (mu): The mean, representing the player’s estimated skill

- σ (sigma): The standard deviation, representing uncertainty about that estimate

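After each match, both parameters are updated using Bayesian inference:

- μ shifts according to the match outcome (win, loss, or draw), and shifts further when the result is surprising

- σ shrinks as more information is gathered (more games played); a small dynamics term τ is added before each update so that σ never reaches zero and the rating can still follow genuine changes in skill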
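To make the update concrete, here is a minimal, self-contained sketch of the two-player win/loss case from the TrueSkill paper. It assumes the commonly cited defaults μ₀ = 25, σ₀ = 25/3, β = 25/6 (performance noise) and τ = 25/300, and it ignores draws (no draw margin). The helper names `update_1v1`, `v_win` and `w_win` are illustrative only; they are not Elote’s API, and Elote’s implementation may differ in details.

```python
import math

# Assumed defaults (TrueSkill paper): mu0 = 25, sigma0 = 25/3,
# beta = sigma0 / 2 (performance noise), tau = sigma0 / 100 (dynamics).
MU0 = 25.0
SIGMA0 = MU0 / 3
BETA = SIGMA0 / 2
TAU = SIGMA0 / 100


def pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-x * x / 2) / math.sqrt(2 * math.pi)


def cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1 + math.erf(x / math.sqrt(2)))


def v_win(t: float) -> float:
    """Additive correction for the mean after a win (no draw margin)."""
    return pdf(t) / cdf(t)


def w_win(t: float) -> float:
    """Multiplicative correction for the variance after a win; lies in (0, 1)."""
    v = v_win(t)
    return v * (v + t)


def update_1v1(winner, loser, beta=BETA, tau=TAU):
    """Hypothetical helper: return new (mu, sigma) for winner and loser."""
    (mu_w, sigma_w), (mu_l, sigma_l) = winner, loser
    # Dynamics: inflate each variance slightly so sigma never collapses to zero
    var_w = sigma_w ** 2 + tau ** 2
    var_l = sigma_l ** 2 + tau ** 2
    # Total uncertainty about the performance difference
    c = math.sqrt(2 * beta ** 2 + var_w + var_l)
    t = (mu_w - mu_l) / c
    v, w = v_win(t), w_win(t)
    # Each mean moves in proportion to that player's own variance
    new_mu_w = mu_w + var_w / c * v
    new_mu_l = mu_l - var_l / c * v
    # Both variances shrink, because 0 < w < 1
    new_sigma_w = math.sqrt(var_w * (1 - var_w / c ** 2 * w))
    new_sigma_l = math.sqrt(var_l * (1 - var_l / c ** 2 * w))
    return (new_mu_w, new_sigma_w), (new_mu_l, new_sigma_l)


a, b = update_1v1((MU0, SIGMA0), (MU0, SIGMA0))
print(f"winner: mu={a[0]:.2f}, sigma={a[1]:.2f}")
print(f"loser:  mu={b[0]:.2f}, sigma={b[1]:.2f}")
```

For two fresh players this moves the winner to roughly μ ≈ 29.21 and the loser to roughly μ ≈ 20.79, while both σ values drop from about 8.33 to about 7.19. Libraries that model a non-zero draw probability will report slightly different numbers.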

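For leaderboards and displayed ranks, TrueSkill uses a “conservative” skill estimate:

- Conservative Rating = μ - 3σ

- Under the Gaussian belief, the player’s true skill exceeds this value with about 99.9% probability, so a player only climbs the leaderboard once the system is confident they deserve it

Matchmaking itself relies on a separate match quality score, based on how likely a game is to end in a draw, so that evenly matched players are paired.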

This approach allows TrueSkill to be cautious with new players (high σ) while quickly adapting as more information becomes available.
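Both ideas fit in a few lines. The sketch below computes the μ − 3σ leaderboard score and the 1v1 match quality formula from the TrueSkill paper, assuming β = 25/6. The function names are hypothetical and are not part of Elote.

```python
import math

BETA = 25 / 6  # assumed performance noise (TrueSkill paper default)


def conservative_rating(mu: float, sigma: float, k: float = 3.0) -> float:
    """Leaderboard-style lower bound: mu minus k standard deviations."""
    return mu - k * sigma


def match_quality(mu_a: float, sigma_a: float, mu_b: float, sigma_b: float,
                  beta: float = BETA) -> float:
    """1v1 match quality (relative draw probability, between 0 and 1)."""
    denom = 2 * beta ** 2 + sigma_a ** 2 + sigma_b ** 2
    spread_term = math.sqrt(2 * beta ** 2 / denom)
    gap_term = math.exp(-((mu_a - mu_b) ** 2) / (2 * denom))
    return spread_term * gap_term


newcomer = (25.0, 25 / 3)
veteran = (25.0, 2.0)
print(f"newcomer conservative rating: {conservative_rating(*newcomer):.2f}")
print(f"veteran conservative rating:  {conservative_rating(*veteran):.2f}")
print(f"quality, veteran vs veteran:  {match_quality(*veteran, *veteran):.2f}")
print(f"quality, newcomer vs veteran: {match_quality(*newcomer, *veteran):.2f}")
```

A brand-new player starts with a conservative rating of about 0 and has to earn leaderboard position as σ shrinks. Quality also drops when either player is uncertain: two veterans with equal μ score about 0.90, while a newcomer against a veteran with the same μ scores only about 0.57.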

Implementing TrueSkill with Elote

Let’s see how TrueSkill works in practice with Elote:

```python
from elote import TrueSkillCompetitor

# Create two competitors
player_a = TrueSkillCompetitor()
player_b = TrueSkillCompetitor()

# Check initial ratings
print(f"Initial ratings - Player A: μ={player_a.mu}, σ={player_a.sigma}")
print(f"Initial ratings - Player B: μ={player_b.mu}, σ={player_b.sigma}")

# Record a match result
player_a.beat(player_b)

# See how ratings changed
print(f"After Player A beats Player B - Player A: μ={player_a.mu}, σ={player_a.sigma}")
print(f"After Player A beats Player B - Player B: μ={player_b.mu}, σ={player_b.sigma}")
```

Notice how both μ and σ change after the match. Player A’s μ increases (a higher skill estimate) and Player B’s μ decreases. Both players’ σ shrink, though: a single game carries information about the loser as well as the winner, so the system becomes more certain about both.

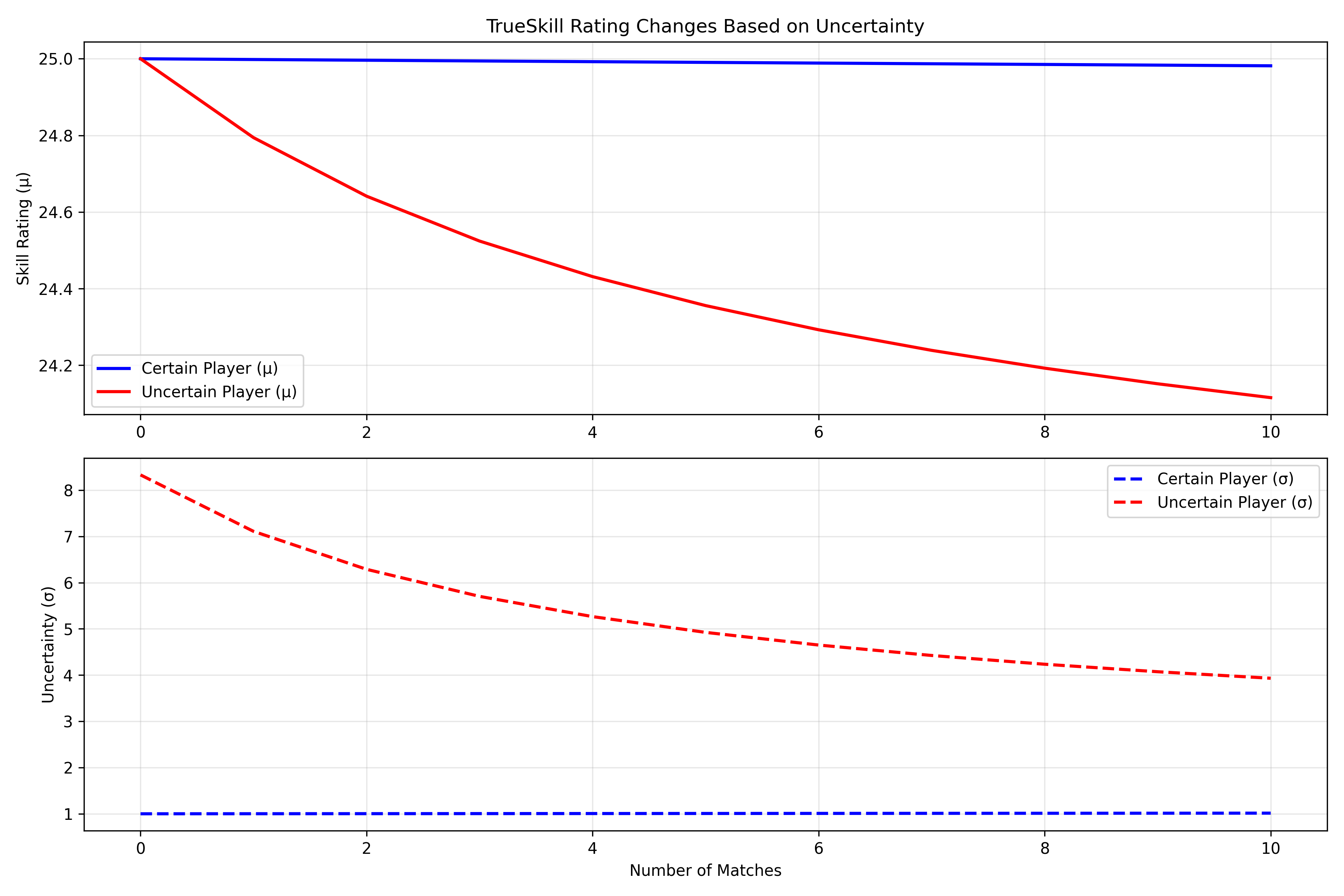

Visualizing TrueSkill: Conservative Rating Updates

One of TrueSkill’s key features is that the size of each rating update depends on how uncertain the system is about each player. Let’s visualize this:

```python
import os

import matplotlib.pyplot as plt
from elote import TrueSkillCompetitor

# Create players with different experience levels
new_player = TrueSkillCompetitor()  # Default μ=25, σ=8.33
experienced_player = TrueSkillCompetitor(mu=25, sigma=2)  # Same skill, but more certain

# Record initial ratings
new_player_ratings = [(new_player.mu, new_player.sigma)]
experienced_player_ratings = [(experienced_player.mu, experienced_player.sigma)]

# Simulate a series of matches where the new player wins
for _ in range(10):
    new_player.beat(experienced_player)
    new_player_ratings.append((new_player.mu, new_player.sigma))
    experienced_player_ratings.append((experienced_player.mu, experienced_player.sigma))

# Plot the results
plt.figure(figsize=(12, 6))

# Plot μ changes
plt.subplot(1, 2, 1)
plt.plot([r[0] for r in new_player_ratings], label='New Player μ')
plt.plot([r[0] for r in experienced_player_ratings], label='Experienced Player μ')
plt.xlabel('Number of Matches')
plt.ylabel('μ (Skill Estimate)')
plt.title('TrueSkill μ Changes Over Time')
plt.legend()
plt.grid(True, alpha=0.3)

# Plot σ changes
plt.subplot(1, 2, 2)
plt.plot([r[1] for r in new_player_ratings], label='New Player σ')
plt.plot([r[1] for r in experienced_player_ratings], label='Experienced Player σ')
plt.xlabel('Number of Matches')
plt.ylabel('σ (Uncertainty)')
plt.title('TrueSkill σ Changes Over Time')
plt.legend()
plt.grid(True, alpha=0.3)

plt.tight_layout()

# Save the figure (relies on __file__, so run this as a script, not in a notebook)
project_root = os.path.abspath(os.path.join(os.path.dirname(__file__), '..', '..'))
output_dir = os.path.join(project_root, 'static', 'images', 'elote', '04-trueskill-rating-system')
os.makedirs(output_dir, exist_ok=True)
plt.savefig(os.path.join(output_dir, 'conservative_updates.png'))
plt.close()
```

This visualization shows how TrueSkill scales each update by uncertainty. The two players start with the same μ and play the same ten matches (the new player wins every one), yet the new player’s μ moves far more than the experienced player’s. Each player’s μ update is proportional to that player’s own variance σ², so TrueSkill moves cautiously for an established player (low σ) and aggressively for a newcomer (high σ).
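In the two-player update rule, each μ moves by ±(σᵢ²/c)·v(t), where c² = 2β² + σ_A² + σ_B² and v(t) is shared by both players. The sketch below computes that σᵢ²/c factor for the two players in the experiment above, assuming β = 25/6 and ignoring the small dynamics term τ. The function name is hypothetical.

```python
import math

BETA = 25 / 6  # assumed performance noise (TrueSkill paper default)


def mean_update_factors(sigma_a: float, sigma_b: float, beta: float = BETA):
    """Return sigma_i**2 / c for each player; the mu update is this times v(t)."""
    c = math.sqrt(2 * beta ** 2 + sigma_a ** 2 + sigma_b ** 2)
    return sigma_a ** 2 / c, sigma_b ** 2 / c


new_factor, experienced_factor = mean_update_factors(25 / 3, 2.0)
print(f"new player:         {new_factor:.2f}")
print(f"experienced player: {experienced_factor:.2f}")
print(f"ratio:              {new_factor / experienced_factor:.1f}x")
```

On the first game the newcomer’s step is about 17 times larger, which is simply (8.33 / 2)². The gap narrows over later games as the newcomer’s σ shrinks.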

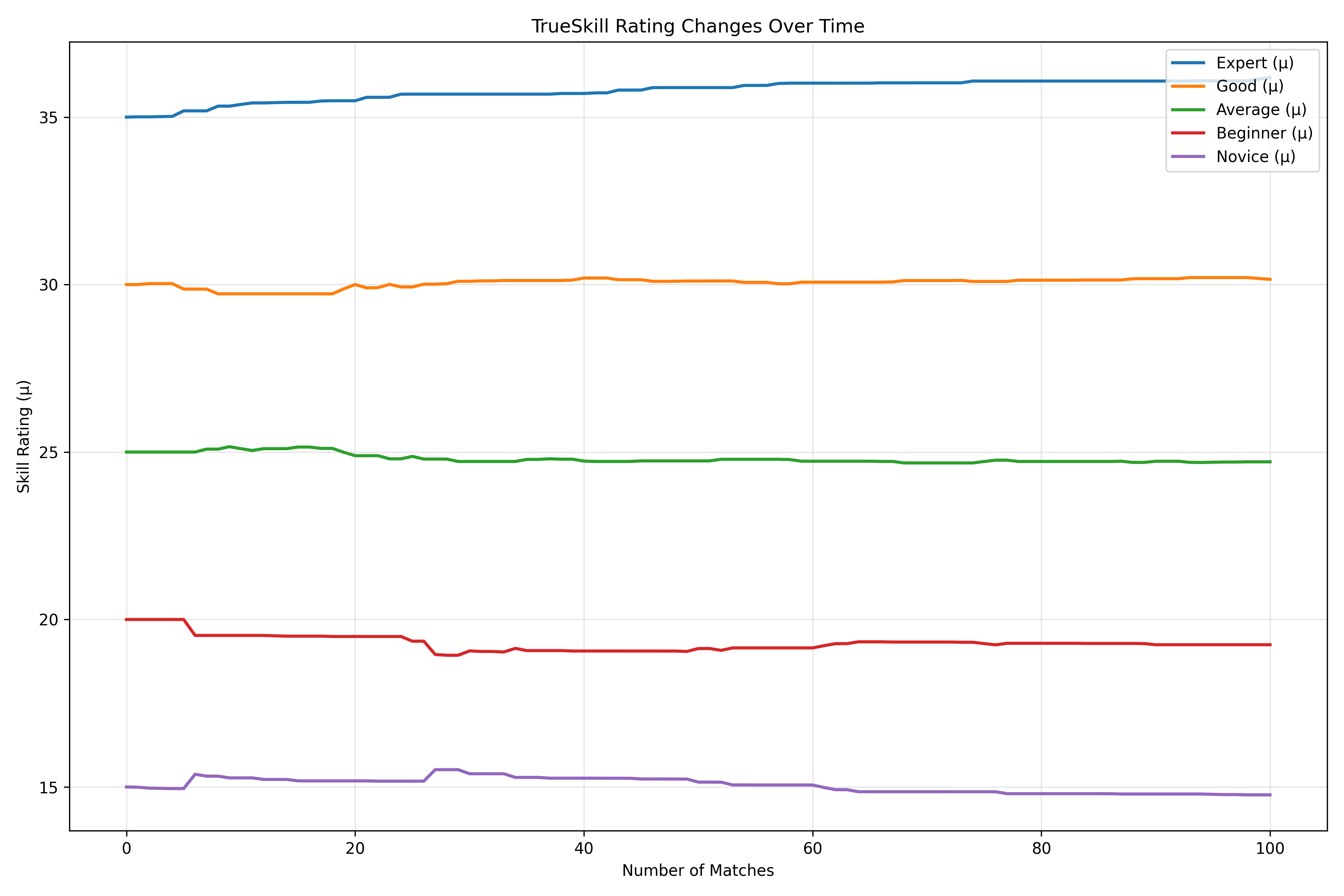

Simulating a Tournament with TrueSkill

Let’s see how TrueSkill performs in a tournament setting:

```python
import os
import random

import matplotlib.pyplot as plt
from elote import TrueSkillCompetitor

# True (hidden) skill levels used to simulate match outcomes
skill_levels = {
    "Expert": 35,
    "Good": 30,
    "Average": 25,
    "Beginner": 20,
    "Novice": 15,
}

# Create competitors (each one starts at its true skill, with σ=8.33)
competitors = {}
for name, skill in skill_levels.items():
    competitors[name] = TrueSkillCompetitor(mu=skill, sigma=8.33)


# Simulate a match: each performance is true skill plus Gaussian noise
def simulate_comparison(a, b):
    a_performance = skill_levels[a] + random.normalvariate(0, 3)
    b_performance = skill_levels[b] + random.normalvariate(0, 3)
    return a_performance > b_performance


# Create all possible matchups
matchups = [(a, b) for a in skill_levels for b in skill_levels if a != b]

# Track ratings over time
rating_history = {name: [(competitors[name].mu, competitors[name].sigma)] for name in skill_levels}

# Run the tournament
for i in range(100):  # 100 matches
    # Pick a random matchup
    a, b = random.choice(matchups)

    # Determine the winner based on true skill
    if simulate_comparison(a, b):
        competitors[a].beat(competitors[b])
    else:
        competitors[b].beat(competitors[a])

    # Record ratings after every 20 matches
    if (i + 1) % 20 == 0:
        print(f"After {i+1} matches:")
        for name in skill_levels:
            mu = competitors[name].mu
            sigma = competitors[name].sigma
            print(f"{name}: μ = {mu:.2f}, σ = {sigma:.2f}")
            rating_history[name].append((mu, sigma))

# Plot the rating changes
plt.figure(figsize=(12, 8))

# Plot μ changes
plt.subplot(2, 1, 1)
for name, history in rating_history.items():
    plt.plot([h[0] for h in history], label=f"{name}")
plt.xlabel('Tournament Progress (20-match intervals)')
plt.ylabel('μ (Skill Estimate)')
plt.title('TrueSkill μ Changes During Tournament')
plt.legend()
plt.grid(True, alpha=0.3)

# Plot σ changes
plt.subplot(2, 1, 2)
for name, history in rating_history.items():
    plt.plot([h[1] for h in history], label=f"{name}")
plt.xlabel('Tournament Progress (20-match intervals)')
plt.ylabel('σ (Uncertainty)')
plt.title('TrueSkill σ Changes During Tournament')
plt.legend()
plt.grid(True, alpha=0.3)

plt.tight_layout()

# Save the figure (output_dir is defined in the previous snippet)
plt.savefig(os.path.join(output_dir, 'sigma_changes.png'))
plt.close()
```

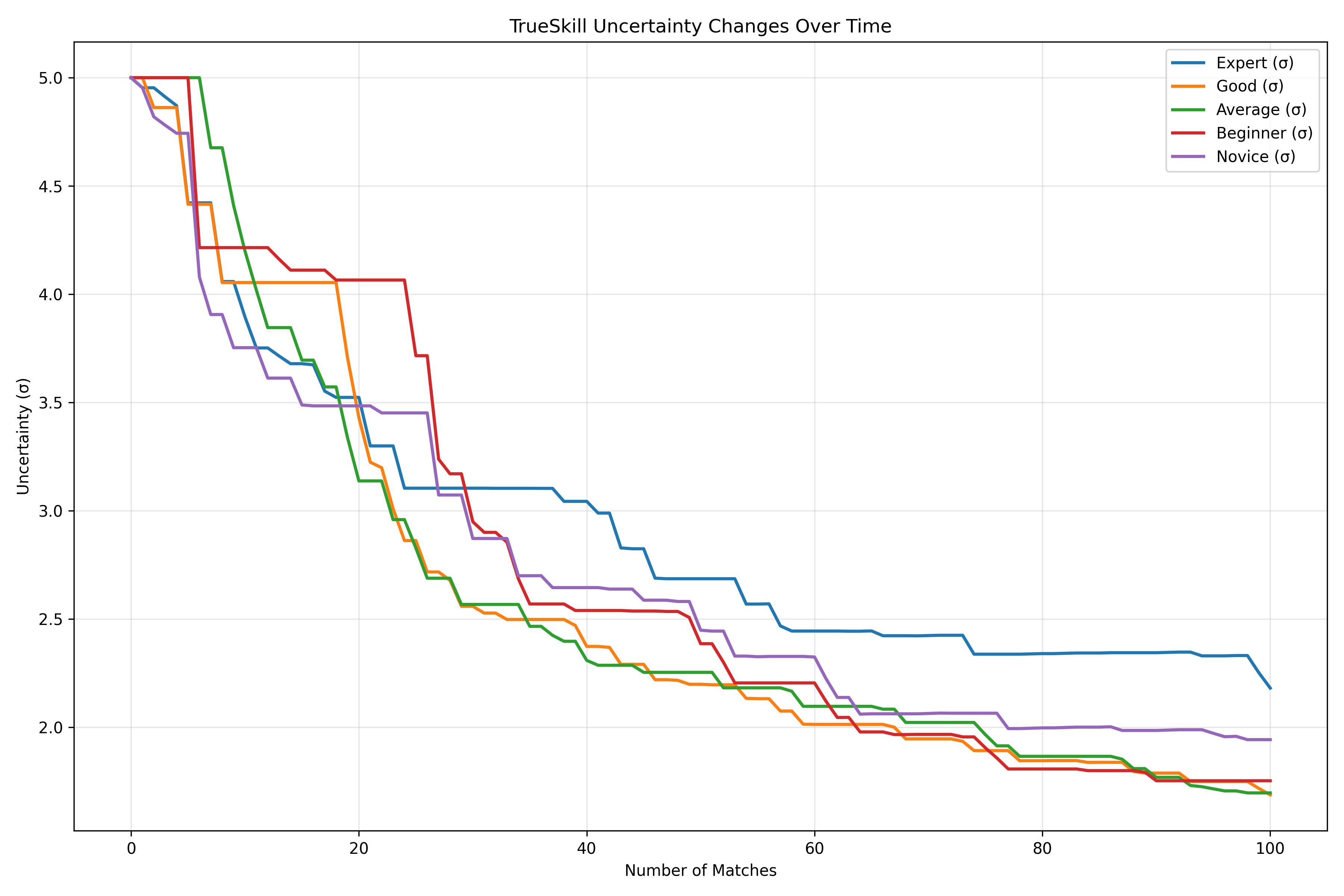

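This simulation shows how TrueSkill ratings evolve during a tournament. The uncertainty (σ) decreases for every player as more matches are played. Because each competitor’s μ was initialized at its true skill level, the μ curves mainly show that TrueSkill keeps the estimates in the right order; starting everyone at the default μ = 25 would be a stricter test of whether the estimates converge toward the true skill levels.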

Real-World Example: Valorant Player Rankings

Let’s use TrueSkill to rank players in a competitive game like Valorant. In this simulation, player roles give some 1v1 matchups a built-in edge:

```python
import os
import random

import matplotlib.pyplot as plt
import numpy as np
from elote import TrueSkillCompetitor

# Valorant players with different roles
valorant_players = [
    "Sentinel_Sam",      # Defensive specialist
    "Duelist_Dana",      # Entry fragger
    "Controller_Chris",  # Smoke/vision control
    "Initiator_Ivy",     # Information gatherer
    "Flex_Felix",        # Versatile player
    "Sniper_Sarah",      # Operator (sniper rifle) specialist
    "Lurker_Larry",      # Flanker
    "IGL_Ian",           # In-game leader
]


# Simulate a 1v1 comparison with simplified role-based bonuses
def simulate_comparison(a, b):
    # Base skill levels
    base_skills = {
        "Sentinel_Sam": 85,
        "Duelist_Dana": 90,
        "Controller_Chris": 82,
        "Initiator_Ivy": 88,
        "Flex_Felix": 86,
        "Sniper_Sarah": 92,
        "Lurker_Larry": 84,
        "IGL_Ian": 80,  # Lower mechanical skill but great leadership
    }

    # Role bonuses (simplified): a player gets +5 when the opponent is listed here
    role_synergies = {
        "Sentinel_Sam": ["Controller_Chris", "IGL_Ian"],
        "Duelist_Dana": ["Initiator_Ivy", "Controller_Chris"],
        "Controller_Chris": ["Duelist_Dana", "Sentinel_Sam"],
        "Initiator_Ivy": ["Duelist_Dana", "Lurker_Larry"],
        "Flex_Felix": ["IGL_Ian", "Sniper_Sarah"],
        "Sniper_Sarah": ["Sentinel_Sam", "Flex_Felix"],
        "Lurker_Larry": ["Initiator_Ivy", "IGL_Ian"],
        "IGL_Ian": ["Flex_Felix", "Sentinel_Sam", "Lurker_Larry"],
    }

    # Calculate the bonus for each side
    a_bonus = 5 if b in role_synergies[a] else 0
    b_bonus = 5 if a in role_synergies[b] else 0

    # Simulate performance with some randomness
    a_performance = base_skills[a] + a_bonus + random.uniform(-10, 10)
    b_performance = base_skills[b] + b_bonus + random.uniform(-10, 10)
    return a_performance > b_performance


# Create 100 random matchups
matchups = []
for _ in range(100):
    a = random.choice(valorant_players)
    b = random.choice([p for p in valorant_players if p != a])
    matchups.append((a, b))

# Create competitors
competitors = {player: TrueSkillCompetitor() for player in valorant_players}

# Run the tournament
for player_a, player_b in matchups:
    if simulate_comparison(player_a, player_b):
        competitors[player_a].beat(competitors[player_b])
    else:
        competitors[player_b].beat(competitors[player_a])

# Display rankings by the conservative estimate μ - 3σ
print("Valorant Player Rankings (TrueSkill):")
sorted_players = sorted(competitors.items(), key=lambda x: x[1].mu - 3 * x[1].sigma, reverse=True)
for i, (player, competitor) in enumerate(sorted_players):
    print(f"{i+1}. {player}: μ={competitor.mu:.2f}, σ={competitor.sigma:.2f}, Rating={competitor.mu - 3*competitor.sigma:.2f}")

# Compare different ranking methods
ranking_methods = {
    "μ only": [(p, c.mu) for p, c in competitors.items()],
    "μ - σ": [(p, c.mu - c.sigma) for p, c in competitors.items()],
    "μ - 2σ": [(p, c.mu - 2 * c.sigma) for p, c in competitors.items()],
    "μ - 3σ": [(p, c.mu - 3 * c.sigma) for p, c in competitors.items()],
}

# Sort each ranking method
for method, ratings in ranking_methods.items():
    ranking_methods[method] = sorted(ratings, key=lambda x: x[1], reverse=True)

# Plot the different rankings
plt.figure(figsize=(12, 8))
methods = list(ranking_methods.keys())
x = np.arange(len(valorant_players))
width = 0.2
offsets = np.linspace(-0.3, 0.3, len(methods))

for i, method in enumerate(methods):
    # Get ranks (position in sorted list)
    ranks = {p: r + 1 for r, (p, _) in enumerate(ranking_methods[method])}
    # Plot in original player order for consistency
    plt.bar(x + offsets[i], [ranks[p] for p in valorant_players], width, label=method)

plt.xlabel('Players')
plt.ylabel('Rank (lower is better)')
plt.title('TrueSkill: Comparing Different Ranking Methods')
plt.xticks(x, valorant_players, rotation=45)
plt.yticks(np.arange(1, len(valorant_players) + 1))
plt.gca().invert_yaxis()  # Invert y-axis so rank 1 is at the top
plt.legend()
plt.tight_layout()

# Save the figure (output_dir is defined in the first plotting snippet)
plt.savefig(os.path.join(output_dir, 'ranking_methods.png'))
plt.close()
```

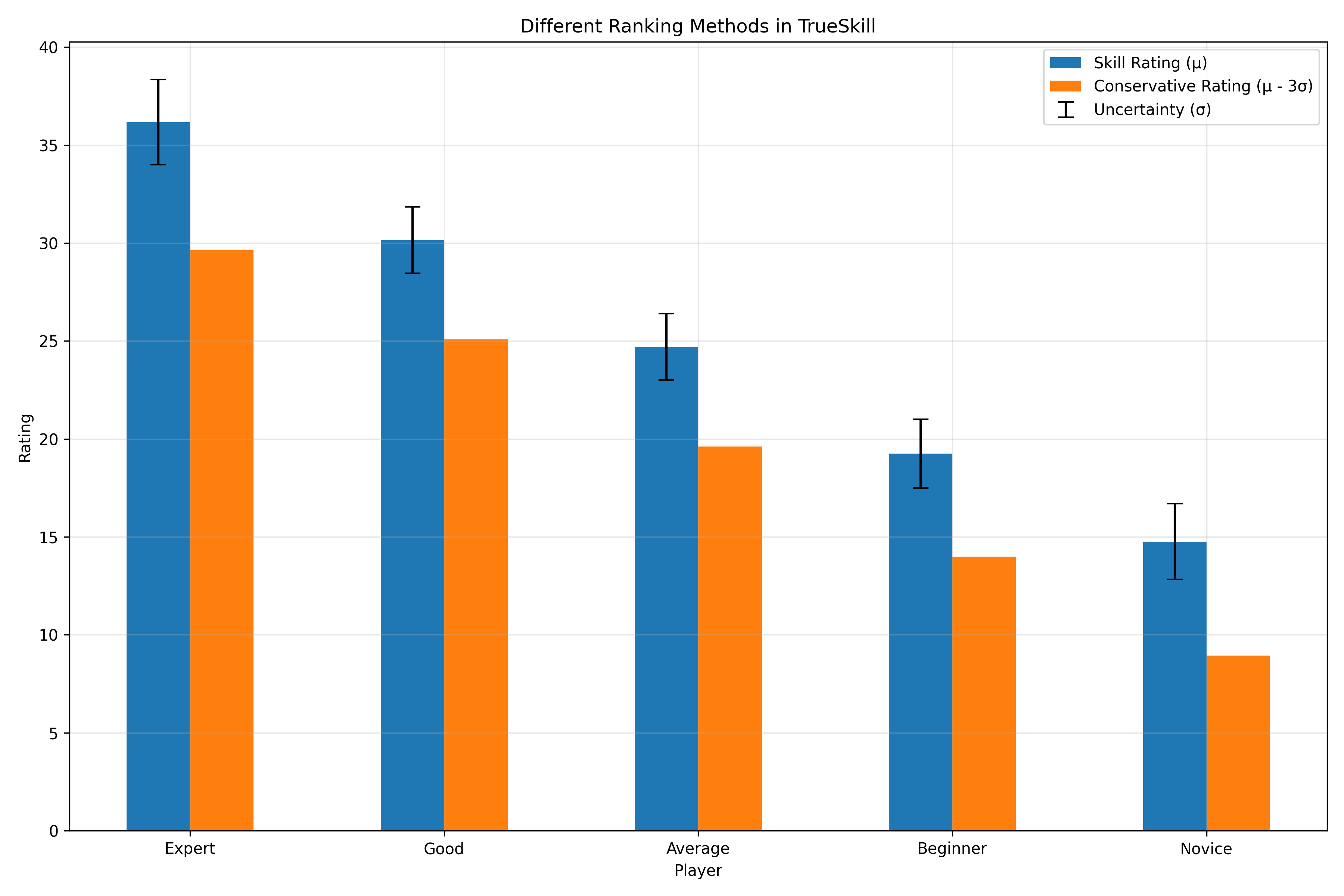

This example shows how TrueSkill can be used to rank players in a competitive game. The role-based bonuses exist only in the simulation; TrueSkill itself sees nothing but wins and losses. The visualization compares ranking by the mean μ alone with progressively more conservative scores, down to μ - 3σ.
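The simulation above is still 1v1, even though real Valorant matches are 5v5. TrueSkill’s team model treats a team’s performance as the sum of its members’ performances. The sketch below implements the two-team win/loss update under stated assumptions: no draws, every player plays the whole match, β = 25/6, and no dynamics term τ. `update_teams` is a hypothetical helper, and this post does not establish whether Elote’s `TrueSkillCompetitor` supports teams.

```python
import math

BETA = 25 / 6  # assumed per-player performance noise (paper default)


def pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-x * x / 2) / math.sqrt(2 * math.pi)


def cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1 + math.erf(x / math.sqrt(2)))


def update_teams(winners, losers, beta=BETA):
    """Hypothetical helper: update (mu, sigma) lists after winners beat losers.

    Team performance is the sum of its players' performances.
    Assumes no draws, full participation, and no dynamics term (tau).
    """
    n_players = len(winners) + len(losers)
    total_var = sum(s ** 2 for _, s in winners + losers)
    # Uncertainty about the difference in team performances
    c = math.sqrt(n_players * beta ** 2 + total_var)
    t = (sum(m for m, _ in winners) - sum(m for m, _ in losers)) / c
    v = pdf(t) / cdf(t)
    w = v * (v + t)

    def apply(team, sign):
        # Each player's shift is scaled by that player's own variance
        return [
            (mu + sign * s ** 2 / c * v, s * math.sqrt(1 - s ** 2 / c ** 2 * w))
            for mu, s in team
        ]

    return apply(winners, +1), apply(losers, -1)


default = (25.0, 25 / 3)
new_a, new_b = update_teams([default] * 5, [default] * 5)
print(f"5v5 winner: mu={new_a[0][0]:.2f}, sigma={new_a[0][1]:.2f}")
print(f"5v5 loser:  mu={new_b[0][0]:.2f}, sigma={new_b[0][1]:.2f}")
```

With one player per side this reduces to the 1v1 update. In a 5v5 between fresh players, each player’s μ moves by only about 1.9 points instead of roughly 4.2, because one team result says less about any individual.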

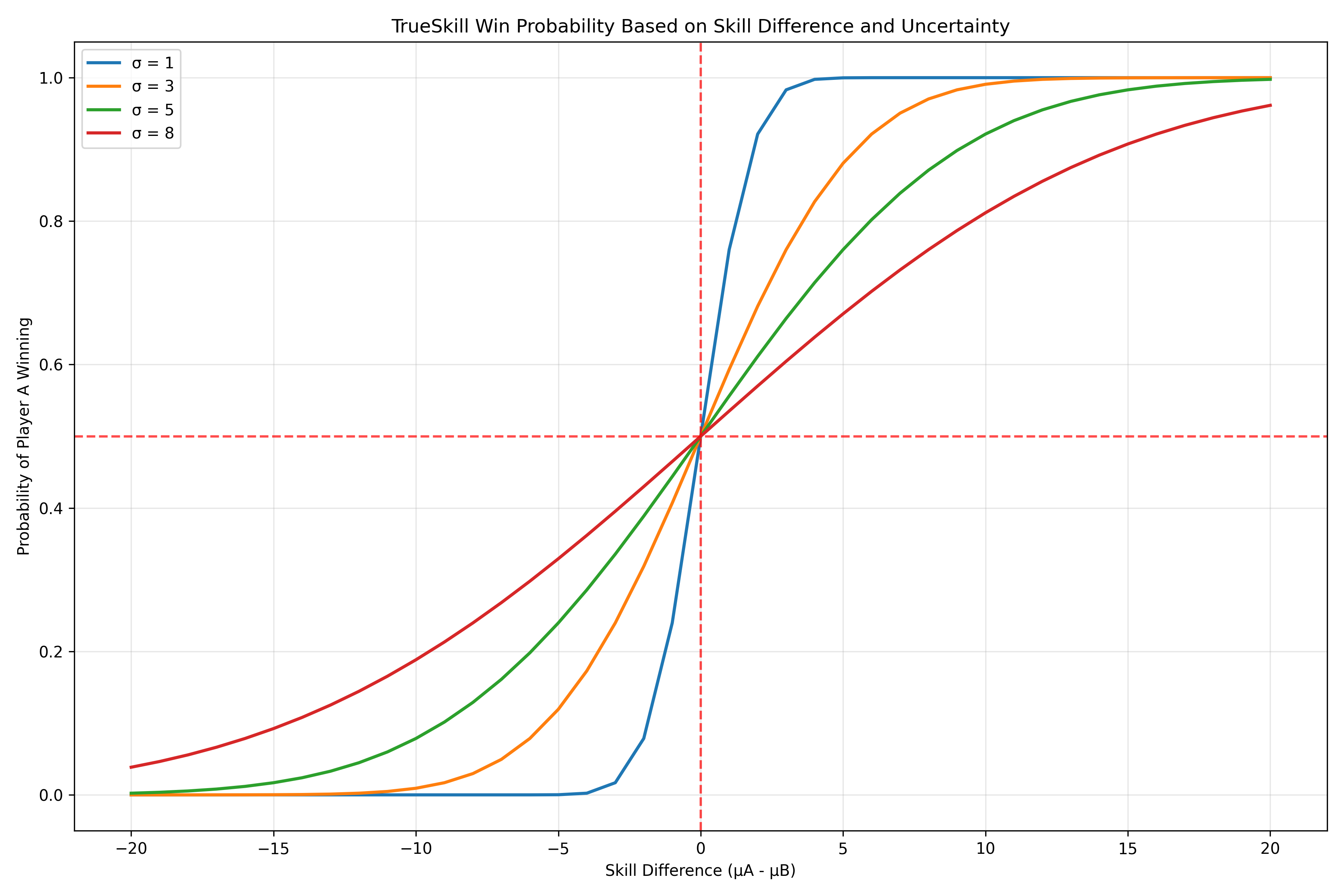

Win Probability in TrueSkill

TrueSkill can also calculate the probability of one player beating another:

```python
import os

import matplotlib.pyplot as plt
import numpy as np
from elote import TrueSkillCompetitor

# Create a range of skill differences
mu_diffs = np.arange(-15, 16, 1)
sigma_values = [1, 2, 4, 8]
win_probs = {}

# Calculate win probability for different sigma values
for sigma in sigma_values:
    win_probs[sigma] = []
    for mu_diff in mu_diffs:
        # mu_diff = μ_A - μ_B, matching the x-axis label below
        player_a = TrueSkillCompetitor(mu=25 + float(mu_diff), sigma=sigma)
        player_b = TrueSkillCompetitor(mu=25, sigma=sigma)
        win_probs[sigma].append(player_a.expected_score(player_b))

# Plot the results
plt.figure(figsize=(10, 6))
for sigma, probs in win_probs.items():
    plt.plot(mu_diffs, probs, label=f'σ = {sigma}')
plt.axhline(y=0.5, color='r', linestyle='--', alpha=0.5)
plt.axvline(x=0, color='r', linestyle='--', alpha=0.5)
plt.grid(True, alpha=0.3)
plt.xlabel('μ Difference (Player A - Player B)')
plt.ylabel('Probability of Player A Winning')
plt.title('TrueSkill Win Probability vs. Skill Difference')
plt.legend()

# Save the figure (output_dir is defined in the first plotting snippet)
plt.savefig(os.path.join(output_dir, 'win_probability.png'))
plt.close()

# Now let's look at rating changes
rating_changes = []
for mu_diff in mu_diffs:
    # mu_diff = μ_opponent - μ_player, matching the x-axis label below
    player_a = TrueSkillCompetitor(mu=25, sigma=4)
    player_b = TrueSkillCompetitor(mu=25 + float(mu_diff), sigma=4)
    initial_mu = player_a.mu  # Record initial rating
    player_a.beat(player_b)  # Player A wins
    rating_changes.append(player_a.mu - initial_mu)  # Record rating change

# Plot the results
plt.figure(figsize=(10, 6))
plt.plot(mu_diffs, rating_changes)
plt.axhline(y=0, color='r', linestyle='--', alpha=0.5)
plt.axvline(x=0, color='r', linestyle='--', alpha=0.5)
plt.grid(True, alpha=0.3)
plt.xlabel('μ Difference (Opponent - Player)')
plt.ylabel('μ Change After Win')
plt.title('TrueSkill Rating Change vs. Skill Difference')

# Save the figure
plt.savefig(os.path.join(output_dir, 'trueskill_rating_changes.png'))
plt.close()
```

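These visualizations show how TrueSkill calculates win probabilities and rating changes. In the first plot, higher uncertainty (σ) produces a flatter win probability curve, reflecting less confidence in the outcome prediction. In the second, beating a stronger opponent (a positive difference) earns a larger μ gain than beating a weaker one, and the gain shrinks toward zero as the opponent gets weaker.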
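The flattening has a simple source in TrueSkill’s model. Each performance is drawn from N(μ, σ² + β²), so the probability that A outperforms B is Φ((μ_A − μ_B) / √(2β² + σ_A² + σ_B²)). Larger σ widens the denominator and pulls the probability toward 0.5. The sketch below assumes β = 25/6 and ignores draws; Elote’s `expected_score` may not use exactly this expression.

```python
import math

BETA = 25 / 6  # assumed performance noise (TrueSkill paper default)


def win_probability(mu_a: float, sigma_a: float, mu_b: float, sigma_b: float,
                    beta: float = BETA) -> float:
    """P(A outperforms B) when each performance ~ N(mu, sigma**2 + beta**2); draws ignored."""
    spread = math.sqrt(2 * beta ** 2 + sigma_a ** 2 + sigma_b ** 2)
    z = (mu_a - mu_b) / spread
    # Standard normal CDF via the error function
    return 0.5 * (1 + math.erf(z / math.sqrt(2)))


for sigma in (1, 2, 4, 8):
    p = win_probability(30, sigma, 25, sigma)
    print(f"sigma={sigma}: P(A wins) with mu_A - mu_B = 5 is {p:.3f}")
```

Performance noise β sets a floor on unpredictability. Even with σ = 0, a 5-point edge gives about 0.80 rather than certainty.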

Pros and Cons of TrueSkill

Pros:

- Uncertainty tracking: Explicitly models confidence in skill estimates

- Fast convergence: Reaches accurate ratings with fewer games

- Team support: Designed for team games, not just 1v1

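- Match quality and conservative ranks: Predicts how evenly matched a game will be, which helps avoid frustrating mismatches, and keeps uncertain players from shooting up the leaderboard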

- Bayesian foundation: Mathematically rigorous approach

Cons:

- Complexity: More complex than Elo or Glicko

- Computational cost: Games with three or more teams require iterative message passing, which is more expensive than Elo’s closed-form update

- Less intuitive: Harder to explain to non-technical users

- Proprietary origins: Originally developed as a proprietary system

- Parameter sensitivity: Results can be sensitive to parameter choices such as β (performance noise), τ (dynamics), and the draw probability

When to Use TrueSkill

TrueSkill is best when:

- You need to rate players after very few games

- You’re dealing with team-based competitions

- You want to create balanced matchmaking

- You need to handle matches with more than two players/teams

- You have the computational resources for more complex calculations

Conclusion: The Modern Bayesian Approach

TrueSkill represents a significant advancement in rating system design, bringing Bayesian methods to bear on the problem of skill estimation. Its sophisticated uncertainty handling makes it particularly well-suited for modern gaming environments, where players expect fair matchmaking from their very first game.

While more complex than earlier systems, TrueSkill’s benefits often outweigh its costs, especially in contexts where player retention depends on creating balanced, enjoyable matches.

In our next post, we’ll explore the ECF (English Chess Federation) rating system, which takes a completely different approach to the rating problem.

Until then, may your μ rise and your σ fall!
